The Challenge

Standard LLM wrappers suffer from the "blank slate" problem, hallucinate products, hit dead ends on zero-result searches, and trap users in echo chambers.

The Approach

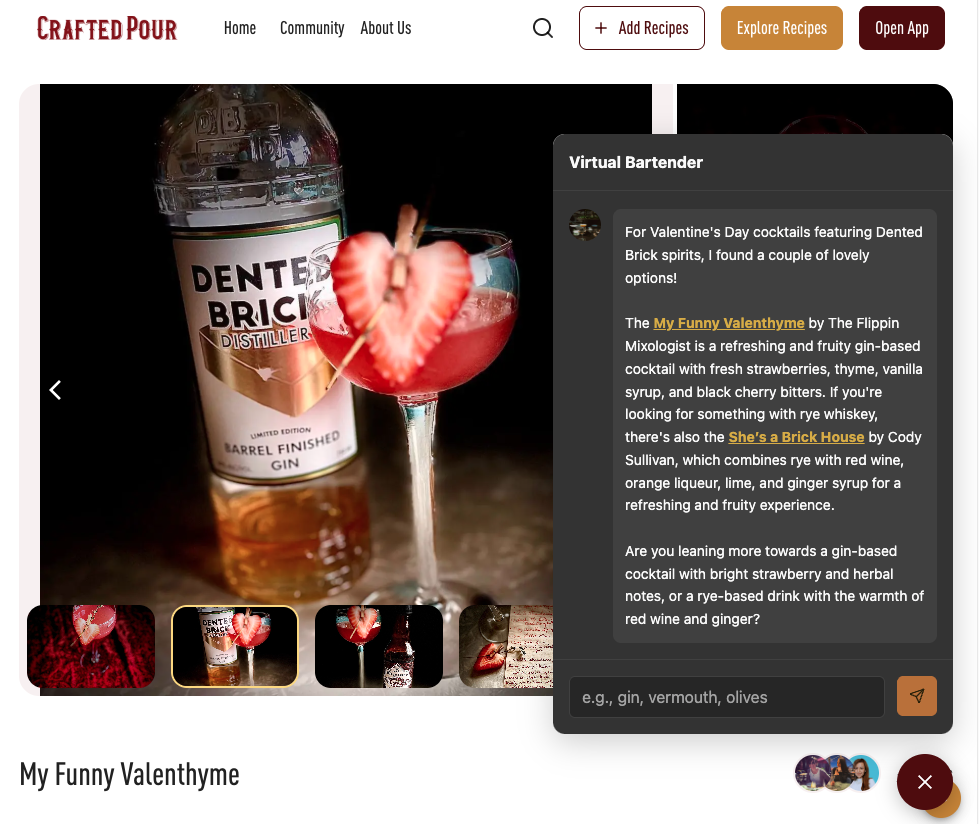

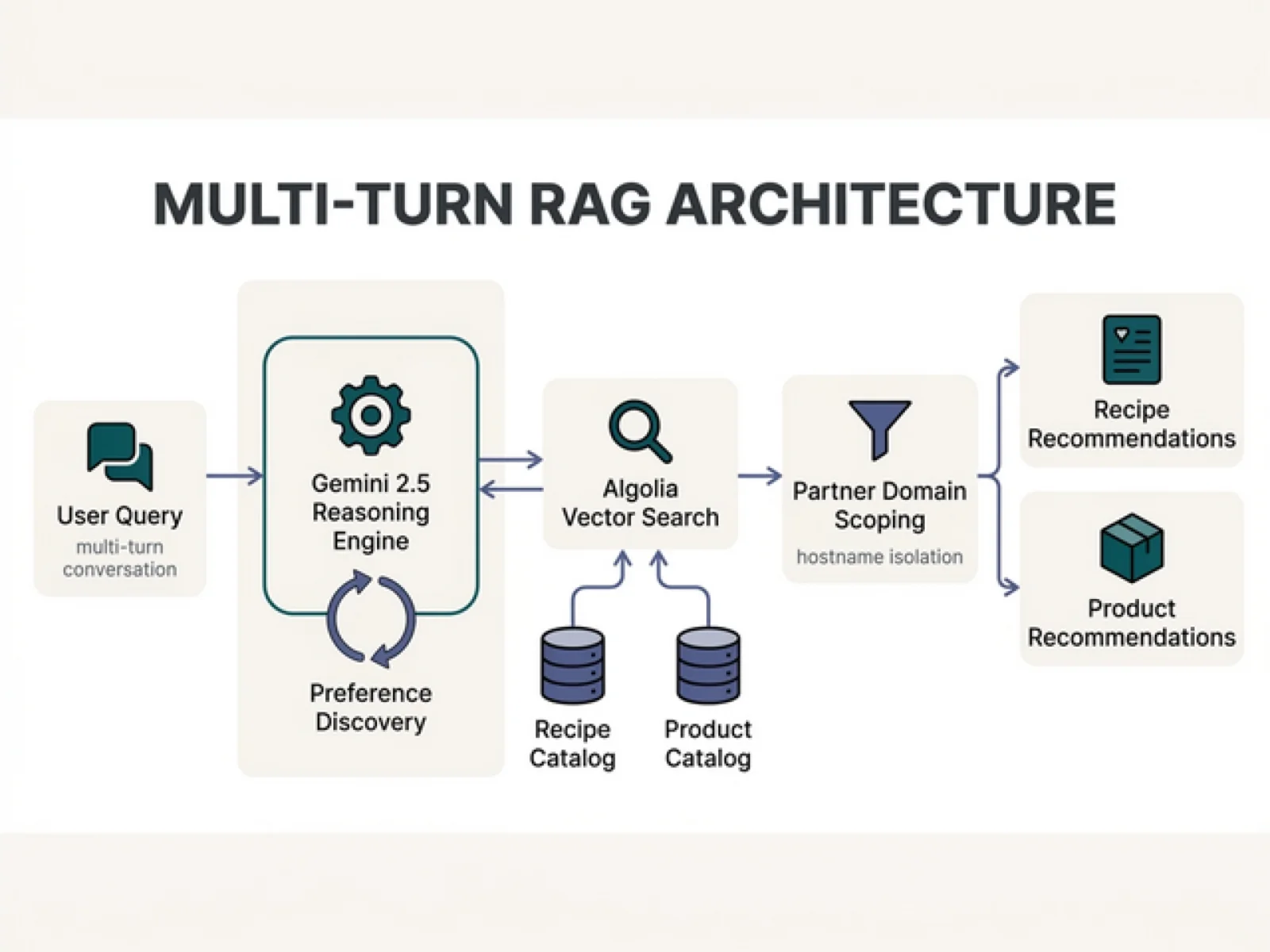

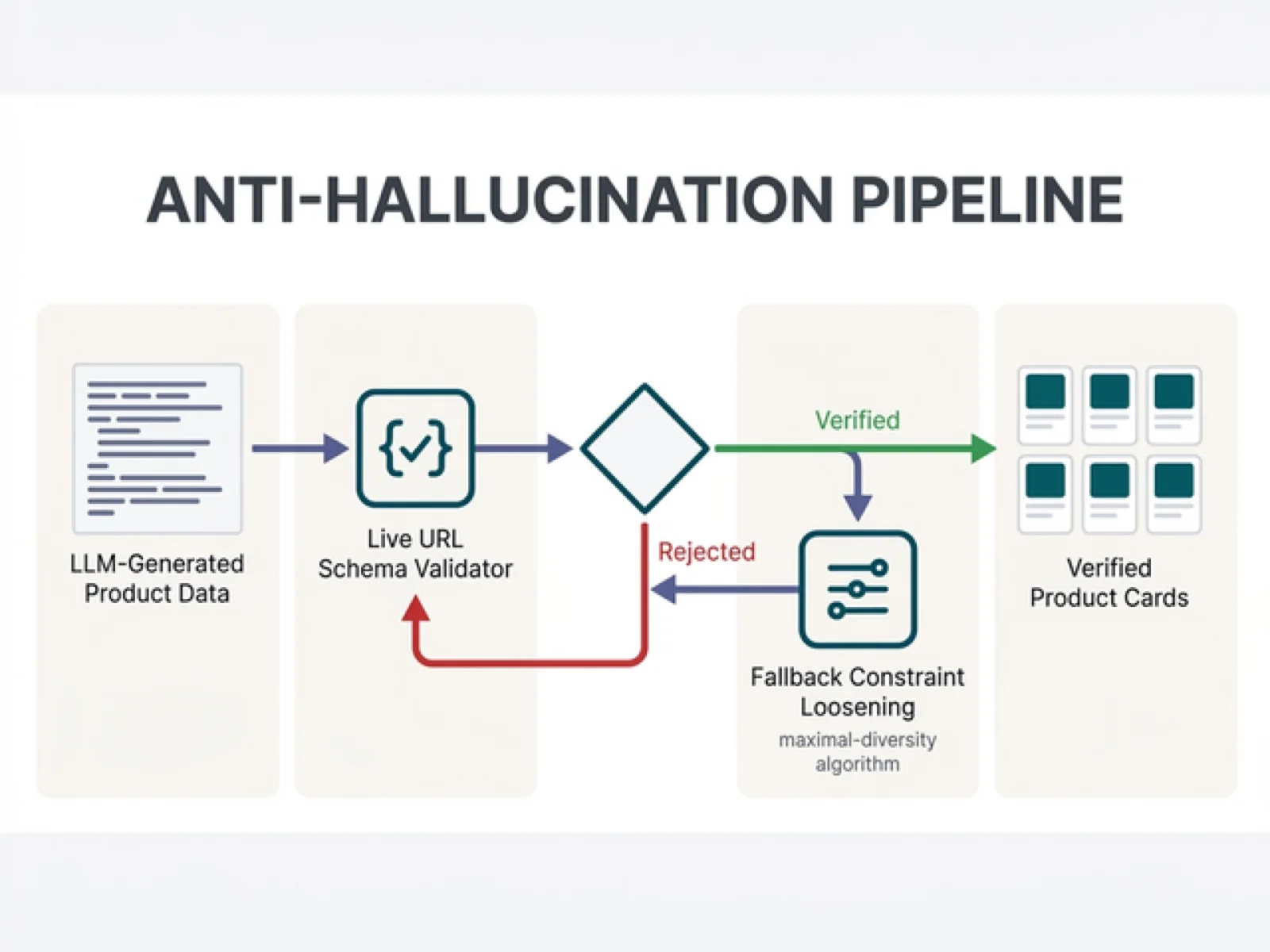

I personally built and shipped a production, retrieval-grounded, multi-turn AI shopping assistant, the "Virtual Bartender", using Gemini 2.5, Algolia vector search for RAG, and Firebase/Firestore. Instead of returning zero results, the system dynamically loosens search constraints to maximize recommendation diversity, building on my 2013 patent application on serendipitous content discovery (US App. 20130232200). A fallback system preemptively builds verified product URLs, eliminating hallucinated links. On partner domains, a hostname → partner mapping strictly sandboxes the assistant so that it recommends only that partner's products.
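The three mechanisms above (constraint loosening, catalog-built links, and hostname sandboxing) can be illustrated with a minimal TypeScript sketch. Everything in it is an assumption for illustration, not the production code: the `Product` shape, the `RELAX_ORDER` priority, the `PARTNER_BY_HOST` table, the `/products/<slug>` URL pattern, and the one-per-flavor `diversify` heuristic are all hypothetical. It filters an in-memory array rather than calling Algolia, and it does not reproduce the method of the patent application.

```typescript
// Hypothetical product record; not the production schema.
interface Product {
  id: string;
  partner: string;
  spirit: string;
  flavor: string;
  price: number;
  slug: string;
}

// Hypothetical constraints parsed from the user's request.
type Constraints = Partial<Pick<Product, "spirit" | "flavor">> & { maxPrice?: number };

// Assumed relaxation priority: drop the least important constraint first.
const RELAX_ORDER: (keyof Constraints)[] = ["flavor", "maxPrice", "spirit"];

// Hypothetical hostname -> partner table used for sandboxing.
const PARTNER_BY_HOST: Record<string, string> = {
  "shop.example-partner.com": "example-partner",
};

function partnerForHost(hostname: string): string | undefined {
  return PARTNER_BY_HOST[hostname.toLowerCase()];
}

function matches(p: Product, c: Constraints): boolean {
  return (
    (c.spirit === undefined || p.spirit === c.spirit) &&
    (c.flavor === undefined || p.flavor === c.flavor) &&
    (c.maxPrice === undefined || p.price <= c.maxPrice)
  );
}

// Assumed diversity heuristic: show one item per flavor before any repeats.
function diversify(items: Product[], limit: number): Product[] {
  const seen = new Set<string>();
  const novel: Product[] = [];
  const repeats: Product[] = [];
  for (const p of items) {
    if (seen.has(p.flavor)) {
      repeats.push(p);
    } else {
      seen.add(p.flavor);
      novel.push(p);
    }
  }
  return [...novel, ...repeats].slice(0, limit);
}

// Links are built from catalog data, never taken from model output (URL pattern is hypothetical).
function productUrl(p: Product, baseUrl: string): string {
  return new URL(`/products/${encodeURIComponent(p.slug)}`, baseUrl).toString();
}

function recommend(
  catalog: Product[],
  constraints: Constraints,
  hostname: string,
  minResults = 3,
  limit = 5,
): { items: Product[]; dropped: (keyof Constraints)[] } {
  // Sandbox: on a mapped partner host, only that partner's products are eligible.
  const partner = partnerForHost(hostname);
  const pool = partner ? catalog.filter((p) => p.partner === partner) : catalog;

  let active: Constraints = { ...constraints };
  let results = pool.filter((p) => matches(p, active));
  const dropped: (keyof Constraints)[] = [];

  // Progressive relaxation: loosen one constraint at a time until there are enough results.
  for (const key of RELAX_ORDER) {
    if (results.length >= minResults) break;
    if (active[key] === undefined) continue;
    const next: Constraints = { ...active };
    delete next[key];
    active = next;
    dropped.push(key);
    results = pool.filter((p) => matches(p, active));
  }
  return { items: diversify(results, limit), dropped };
}
```

In this sketch a caller would pass each returned item through `productUrl` before rendering, and could surface `dropped` to tell the user which preferences were loosened. A fixed relaxation order is the simplest choice; a real system might instead rank candidate relaxations by how many new results each one unlocks.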

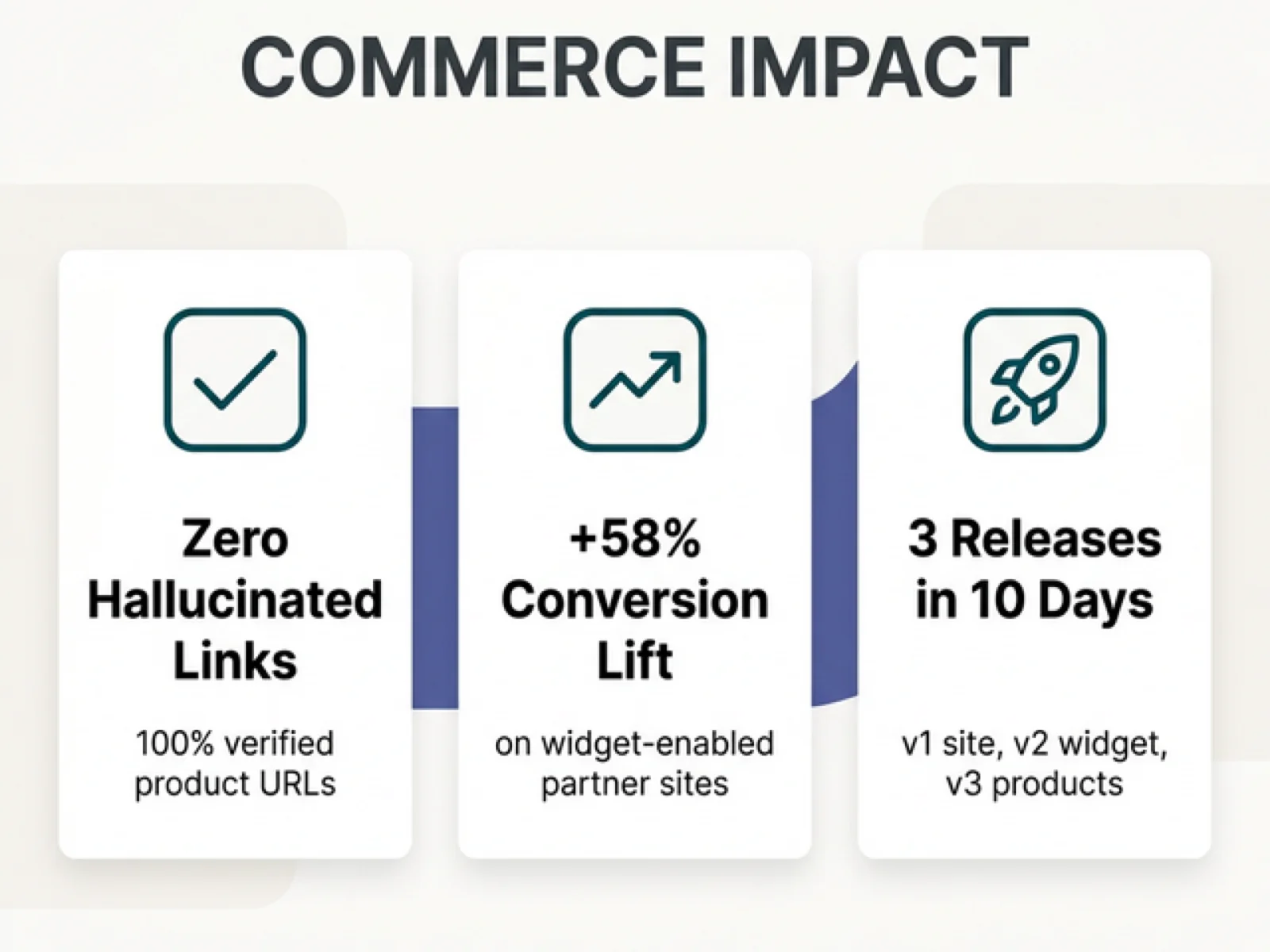

Impact

Shipped a production-grade AI platform with zero hallucinated product links. The Virtual Bartender is live across the Next.js website, Vue 3 embeddable widget, and Flutter mobile app (iOS/Android). Try it live below.